Getting Googlebot to crawl your site regularly is important for search engine visibility and ranking. When Googlebot crawls a site, it naturally receives a mix of responses such as 200 and 404. You can review the crawl details for your site in the Crawl Errors section of your Google Webmaster Tools account and then fix any crawl errors shown there.

In some cases there are files or folders that we have intentionally blocked from Googlebot via the robots.txt file. These still show up as crawl error messages in the Crawl Errors section of Google Webmaster Tools. In other cases, files or folders are blocked from Googlebot unintentionally, and these also produce error messages in the Crawl Errors section.
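To tell intentional blocks apart from accidental ones, it can help to test a few URLs against your robots.txt rules yourself. The following is only a minimal sketch using Python's standard `urllib.robotparser` module. The robots.txt content, the `example.com` URLs, and the helper name `blocked_urls` are all hypothetical and are here purely for illustration; Googlebot's own handling of robots.txt may differ in details from this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative only.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/
Disallow: /tmp/report.html
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical URLs to check against the rules above.
urls = [
    "https://example.com/",
    "https://example.com/private/notes.html",
    "https://example.com/tmp/report.html",
    "https://example.com/blog/post.html",
]

blocked = blocked_urls(ROBOTS_TXT, urls)
for url in blocked:
    print("Blocked for Googlebot:", url)
```

Any URL this reports that you did not mean to block is a candidate for an unintentional crawl error worth fixing in robots.txt.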

Checking the crawl error reports and fixing the issues makes a website healthier. However, going through the reports every time for both intentionally and unintentionally blocked files is time consuming. As a result, most webmasters never spend time on it, which can leave real problems on their sites unnoticed.

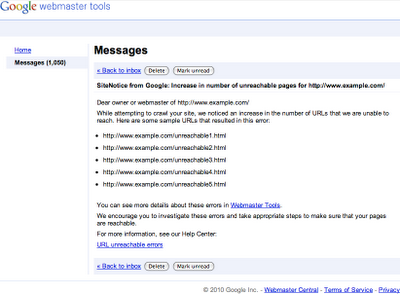

In its latest blog post, Google announced a new Webmaster Tools feature that keeps an eye on the crawl error reports. Google will now send a Site Notice message whenever it detects a significant increase in the number of crawl errors for a site. The message helps webmasters act on the crawl errors, and it includes sample URLs that are causing crawl errors or that Googlebot cannot reach.

The sample image from the official Google Webmaster blog shows what the new Site Notice looks like.
